An Investigation of Suitable Interactions for 3D Manipulation of Distant Objects Through a Mobile Device

Hai-Ning Liang, Cary Williams, Myron Semegen, Wolfgang Stuerzlinger, Pourang Irani

Abstract:

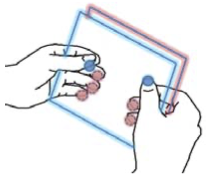

In this paper, we present our research linking two types of technologies that are gaining importance: mobile devices and large displays. Mobile devices now come integrated with a variety of sophisticated sensors, including multi-touch displays, gyroscopes and accelerometers, which allow them to capture a rich variety of gestures and make them well suited as input devices for interacting with an external display. In this research we explore which types of sensors are easy and intuitive to use to support people's exploration of 3D objects shown on large displays located at a distance. To conduct our research we developed a device with motion sensors and two multi-touch displays, one on its front and the other on its back. We then conducted an exploratory study in which we asked participants to propose interactions that they believe are easy and natural for performing operations on 3D objects. We aggregated the results and found common patterns in users' preferred input sensors and interactions. In this paper we report the findings of the study and describe a new interface whose design is informed by the results.